Why AI Won't Replace Labor

The hidden bottlenecks that keep humans at the center of the economy

Everyone is modelling a world where AI eats white-collar employment whole. Here are some reasons that thesis may be badly wrong, and why, in my view, the more boring outcome is STILL the likelier one.

Both Capital Flows & Citrini have done an exceptional job of helping me understand the changes in AI and how to think about the shifts. Like any thesis I consider, I want to know what the path in the OPPOSITE direction looks like.

The AI doom-loop scenario has become the default mental model in macro circles. The logic runs like this: AI displaces white-collar workers, workers spend less, companies invest more in AI to protect margins, AI displaces more workers. Rinse. Collapse.

I think this particular catastrophe is considerably less inevitable than the consensus believes.

This piece argues that “transformative” and “catastrophically disruptive” are not the same thing, and that the history of technology is littered with far more overhyped displacement predictions than actual displacement events.

1) The Capability-Deployment Gap Is Enormous & Structural

Every technology forecasting error of the last century shares a common structure: the gap between what a technology can do in a lab and what it actually does inside a real organisation is measured in decades.

Nuclear power was commercially viable in 1958. Today it generates only about 19% of US electricity (less than it did in the 1990s). Autonomous vehicles were “five years away” in 2014. In 2026, they remain a novelty outside controlled corridors. The internet made physical retail “obsolete” in 1999. Physical stores still account for over 80% of retail sales today.

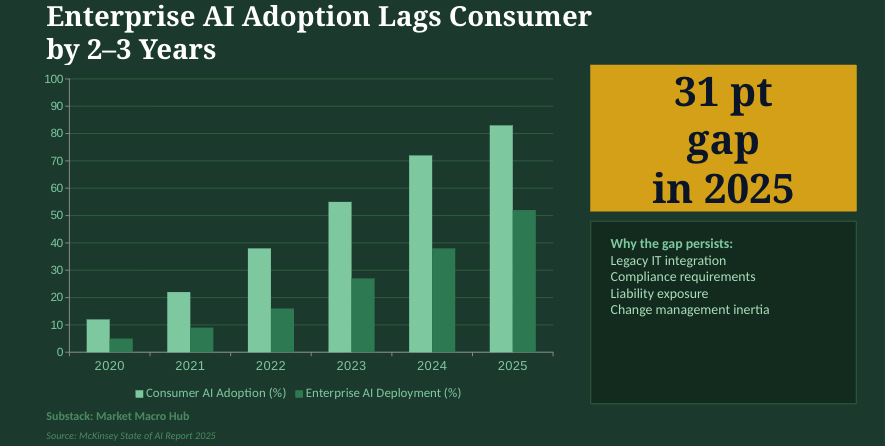

AI is genuinely impressive. But deploying it inside the messy reality of a Fortune 500 company, with its legacy IT infrastructure, compliance requirements, union contracts, regulatory obligations, and liability concerns, is a fundamentally different problem from running a demo. The enterprises that appear most exposed are precisely the ones with the most institutional friction slowing adoption.

“The pessimists of every era predicted machines would render workers redundant. They were right about the machines and wrong about the workers.”

The displacement scenario requires simultaneous deployment of AI across sectors with massively different regulatory environments and organisational cultures. There is no one-size-fits-all rollout. Enterprise tech spreads unevenly and with far more friction than the demos suggest.

2) AI Makes Labor More Productive

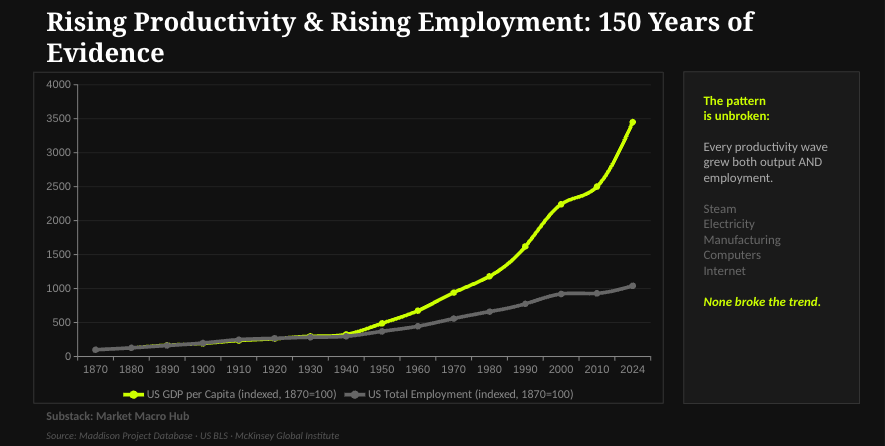

The assumption of the doom-loop is that AI substitutes for labor. The more probable outcome (and the one consistent with two centuries of technological history) is that AI complements labor, raising its productivity and its value.

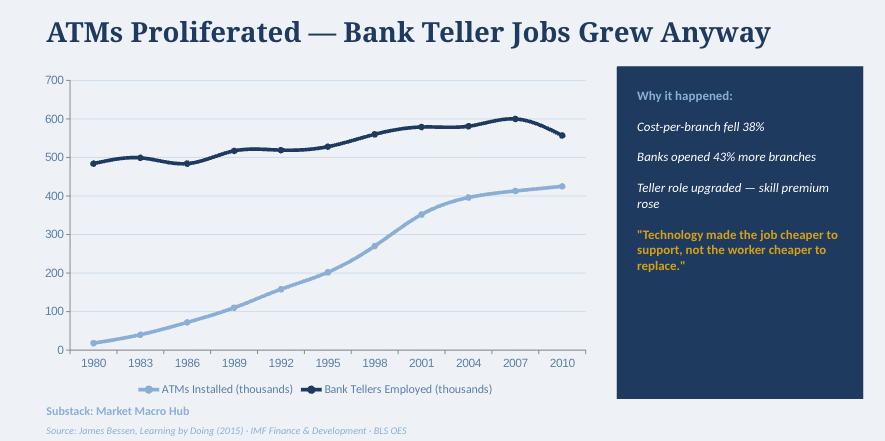

The ATM was supposed to end bank tellers. Instead, ATMs reduced the cost of running a branch, which led banks to open more branches, which created more teller jobs. Word processing was supposed to eliminate secretarial work. It eliminated some rote tasks and expanded the universe of things a skilled administrative professional could do. Excel was supposed to end financial analysts. Instead, it made financial analysis so cheap to produce that demand for it exploded. You get the point…

In each case, the tech made individual workers more productive at their existing tasks and simultaneously created demand for entirely new tasks that only humans could fill.

The argument that “this time is different” because AI can learn new tasks is valid. But it also cuts the other way (the path I am mapping here): an AI that makes every human worker three times as productive is not a labor replacement. It’s a labor multiplier that raises wages and living standards.

3) The “Last Mile” Problem Is Harder Than It Looks

AI performs impressively on the 80% of tasks that are well-defined, well-documented, and set in structured environments. But it struggles badly on the 20% that aren’t, and in most professional contexts that final 20% is where most of the actual value resides. People will say the split will move to 90/10, but that doesn’t change the fact that some share of work sits outside structured environments, where AI isn’t the fix. Intuition and critical thinking are still needed.

A coding agent can generate functional software from a specification. It cannot navigate the internal politics of an organisation to understand what the specification should actually say. A legal AI can draft a contract. It cannot read the room in a negotiation, build the client relationship that made the negotiation possible, or exercise the judgment about when walking away is the right move. A financial AI can model a transaction. It cannot build the trust with a board of directors that gives a banker the mandate to advise on it. Once again, you get where I’m going with this.

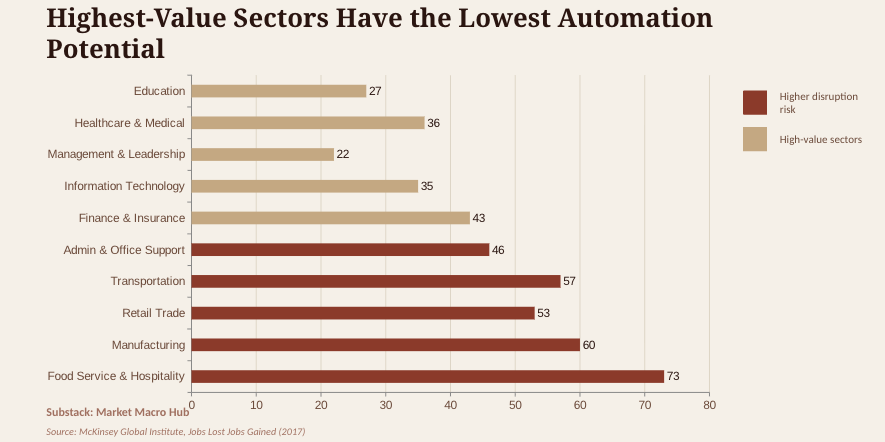

This reflects something fundamental about the nature of organisational work: a large fraction of professional value creation is embedded in relationships, judgment (the biggest one), and context that doesn’t sit in any training set. The tasks most amenable to AI automation are, almost by definition, the lowest-value tasks in any professional’s portfolio.

What AI is most likely to do is compress the time professionals spend on low-value tasks, freeing them to do more of the high-value work that justifies their salaries. Will some jobs be lost? NO DOUBT. But how many is exactly the question I’m arguing about.

4) Demand for Expertise Expands When It Gets Cheaper to Access

One of the most consistent findings in economics is that when the price of a service falls, demand for it tends to rise, often dramatically. The displacement scenario treats the demand side as fixed. It almost certainly isn’t.

Today, only large corporations can afford sophisticated legal counsel, high-end financial advisory, or expert medical opinions. If AI makes this kind of expertise an order of magnitude cheaper to access, the most likely outcome is that the market for these services expands dramatically to include the millions of businesses and individuals currently priced out.

Small businesses have historically operated without proper accounting, without legal review of contracts, without strategic financial planning, not because they don’t want these things but because the cost was prohibitive. A world where every SME has access to CFO-quality financial analysis is not a world with fewer financial analysts. It’s a world with more of them, serving a vastly larger market.
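
To make the demand-side logic concrete, here is a minimal sketch in Python. It assumes a constant-elasticity demand curve, which is my simplification and not something measured, and the prices and elasticity values are purely hypothetical. The point it illustrates: when the price of a service falls, whether total spending on that service grows or shrinks depends on how strongly demand responds.

```python
def quantity_ratio(price_ratio, elasticity):
    """Demand after a price change, relative to before.

    Assumes constant-elasticity demand: Q / Q0 = (P / P0) ** (-elasticity).
    """
    return price_ratio ** (-elasticity)


def spending_ratio(price_ratio, elasticity):
    """Total spending (price * quantity) after the change, relative to before."""
    return price_ratio * quantity_ratio(price_ratio, elasticity)


if __name__ == "__main__":
    # Hypothetical scenario: expertise becomes ten times cheaper to access.
    price_ratio = 0.1
    # Illustrative elasticities only; none of these are estimates.
    for elasticity in (0.5, 1.0, 1.5):
        q = quantity_ratio(price_ratio, elasticity)
        s = spending_ratio(price_ratio, elasticity)
        print(f"elasticity={elasticity}: quantity x{q:.2f}, spending x{s:.2f}")
```

With a tenfold price cut, an elasticity of 1.5 leaves total spending roughly 3.2 times higher, while an elasticity of 0.5 leaves it at roughly a third of its old level. So the expansion argument rests on demand for expertise being elastic (above 1), which is what the priced-out small business story suggests.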

One key caveat: AI’s impact is sector-specific. It will hit different parts of the economy in different ways and at different stages.

“The demand for intelligence doesn’t disappear when it gets cheaper. It explodes.”

This is the pattern with every technology that creates access to previously scarce expertise. The internet era has produced more content, more journalists, and more niche publications than any previous era in history.

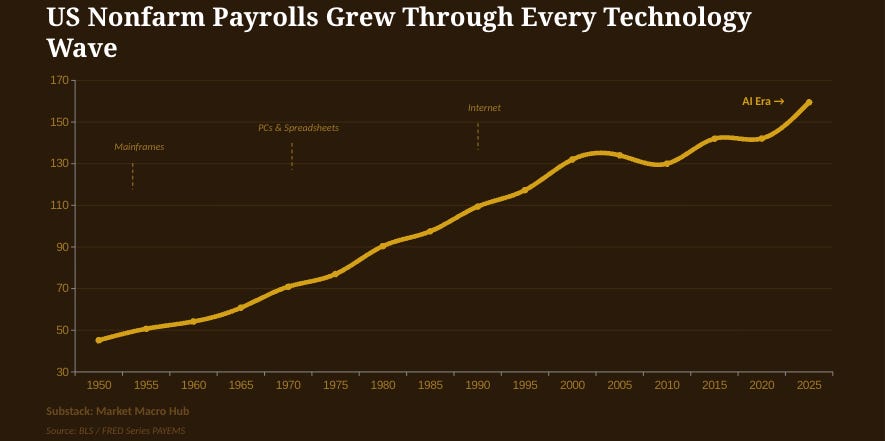

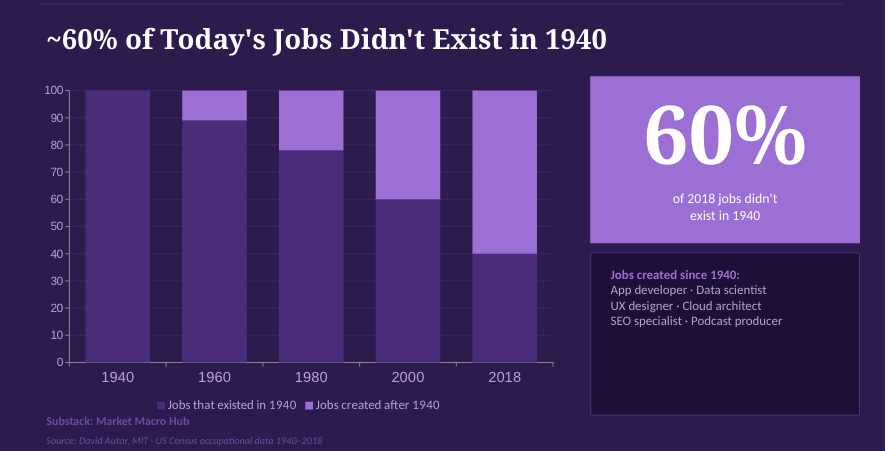

If 60% of today’s jobs didn’t exist in 1940, and emerged over a period (1940 to now) of MASSIVE technological change, then I find it hard to believe that the development of AI will produce such extreme unemployment.

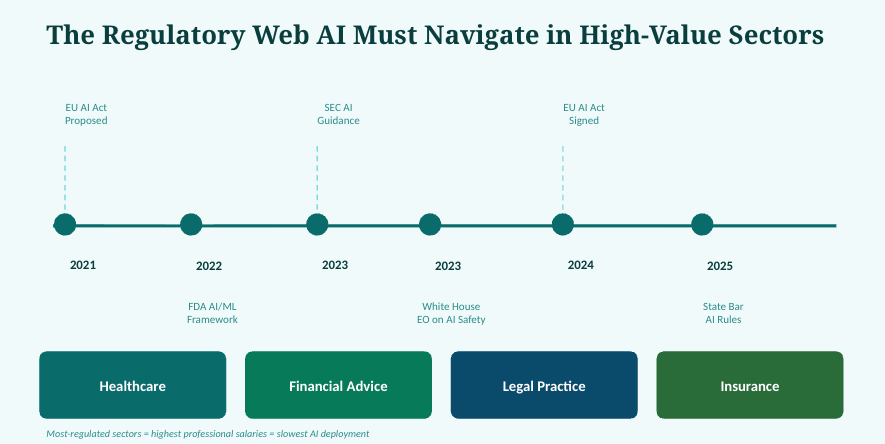

5) Regulatory and Liability Constraints Will Slow Deployment in High-Stakes Sectors

The sectors most prominently featured in the displacement narrative (financial advice, legal work, healthcare, insurance) are also, as I touched on earlier, the most heavily regulated sectors in the economy. They are regulated precisely because the consequences of errors are severe, and because consumers cannot easily evaluate the quality of advice they receive.

Regulators do not move at the pace of demo videos. The FDA has spent five years developing frameworks for AI in medical devices, and the SEC is still working through the implications of AI in financial advice. With the rules still being written, regulation could easily tighten further.

To be clear, this isn’t an argument that regulation will stop AI. What I’m saying is that it will slow it dramatically in the highest-value professional service segments, while AI accelerates in less regulated domains. The result is a gradual, sector-by-sector restructuring (not a simultaneous one).

Add liability. When an AI-generated legal brief loses a case, who gets sued? When an AI financial model costs a pension fund billions, who bears the liability? When an AI diagnostic misses a cancer, who pays? Until these liability frameworks are resolved (and they won’t be resolved quickly), organisations face strong incentives to keep humans in every loop where the consequences of error are significant.

6) The Reliability Gap Between “Impressive Demo” and “Production System” Remains Wide

Current AI systems are genuinely impressive at generating plausible-looking outputs. They are considerably less impressive at reliably generating correct outputs in high-stakes, adversarial, or edge-case environments.

Hallucination rates in production AI systems remain significant. Models that perform well on benchmarks often degrade sharply on real-world tasks with distribution shifts from their training data. Agentic systems that work reliably in demos frequently fail in unpredictable ways when deployed at scale. These aren’t corner-case concerns. They’re the primary reason enterprise AI adoption continues to lag consumer AI adoption by years.

Organisations with significant reputational capital at stake (banks, law firms, hospitals, consulting firms) have low tolerance for failure modes they can’t predict or explain. The more consequential the decision, the higher the bar for reliability. Current AI systems do not clear that bar in most high-stakes professional contexts, and the path to clearing it is not obvious.

Key Constraint

The economic value of professional work is disproportionately concentrated in decisions where errors are catastrophic (M&A transactions, medical diagnoses, legal strategy, credit underwriting). These are precisely the decisions where AI’s unreliability is most costly and most visible. The value-to-risk calculus strongly favors keeping humans responsible for these decisions, even as AI handles other tasks.

7) The Economy’s Response to Technological Abundance Has Historically Been Expansion, Not Collapse

The deepest flaw in the displacement spiral thesis is its treatment of aggregate demand as a fixed quantity being competed over. Economies don’t work that way. When technology makes things cheaper to produce, it creates purchasing power for new things that didn’t previously exist.

The industrial revolution actually CREATED consumer demand. Most of what we now consider necessities (indoor plumbing, refrigeration, automobiles, air travel) didn’t exist before industrialisation. The workforce also grew dramatically alongside an explosion in the range of goods and services people demanded.

If AI genuinely does raise productivity substantially, the most likely outcome is a rising standard of living, falling prices for AI-intensive services, and a reallocation of consumer spending toward new categories of goods and experiences that we can’t fully anticipate today. The history of technology is a history of this reallocation happening continuously, with net positive effects on employment and living standards.

The alternative (that humans will simply stop wanting things when tech makes existing things cheaper) has no historical precedent. Human desire for comfort, connection, status, experience, and novelty has proven remarkably expansive. In my view, the productivity gains from AI are more likely to create new categories of demand than to shrink demand overall.

What I’m Actually Watching For

None of this means the transition is costless or that policy responses are unnecessary. Technological transitions create real winners and losers, often with significant distributional consequences. There are workers in specific occupations who will need to retrain. There are regions economically dependent on industries facing disruption. These are legitimate policy concerns that deserve serious attention.

The question is whether these transitional costs cause a macroeconomic catastrophe or a manageable (if painful) restructuring. My base case is the latter.

The conditions that would push me toward the more catastrophic scenario are:

First, a step change in AI reliability that allows autonomous agents to make high-stakes decisions without human oversight across a wide range of domains simultaneously. We’re not there. The question is whether we get there faster than institutions can adapt.

Second, a regulatory environment that fails to impose meaningful liability frameworks for AI-assisted professional decisions, creating incentives for organisations to eliminate human oversight prematurely.

Third, an acceleration in AI capability that outpaces the demand expansion effect (the most likely of the three, if any happens), such that the new jobs and industries created by AI genuinely cannot absorb the workers displaced. This would be historically unprecedented, but the pace of current capability improvement merits attention.

I’m watching all three. None have materialised yet. And until they do, the more probable outcome remains what it has always been when powerful new technologies arrive: disruption and restructuring (messy, uneven, and ultimately generative).

“The canary is still alive. But so is the economy.”